
Discovery Debt

What teams get wrong about the most consequential phase in product development

Feb 2025 · 10 min

Sprint planning, week six. An engineer raises a question about a data dependency nobody anticipated. The designer isn't sure — it wasn't in scope when research was conducted. The product owner escalates. Three days pass. When the answer finally arrives, it changes the acceptance criteria on four tickets. Two need to be rewritten. One goes back to design. The sprint slips.

This moment was avoidable. Everything that caused it was discoverable in week one.

The frustrating part is that this team ran a discovery phase. They did the research. They produced the artefacts. They had a plan before development started. And they still ended up here — in week six, paying for a decision that should have been made in week one.

The problem wasn't that they skipped discovery. It was that the discovery they ran was incomplete.

What Discovery Is Actually For

Discovery is not a research phase. It is not a box to tick before the real work begins. It exists to answer one question — and only one — before a single line of code is written or a pixel placed in a production file:

Are we solving the right problem, for the right people, within the real constraints of this organisation and its technology — and does the solution we're proposing actually move the business outcome we care about?

Everything else — the personas, the journey maps, the insight decks, the workshop outputs — is only valuable insofar as it contributes to answering that question. When it doesn't, it's activity masquerading as progress.

Most discovery engagements produce activity. Very few produce the answer.

The Shortcut Everyone Takes

Here is the pattern that plays out in organisations of every size, across every industry: OKRs get defined, a backlog gets created, and delivery begins. The middle step — the one where you actually interrogate whether your proposed solutions address the opportunities that lead to those outcomes — gets compressed, reframed as a sprint zero, or skipped entirely.

It feels defensible. The backlog traces back to business objectives. The team has alignment. Delivery can start. What's missing is invisible until it isn't.

The shortcut: OKRs to backlog, skipping the middle.

Every item on the backlog has a rationale. None of it has been validated. None of it has been stress-tested against technical constraints or organisational reality. It is a confident list of unvalidated assumptions, and it will be delivered on time and on budget into a product nobody uses quite the way anyone expected.

The cost of this shortcut doesn't appear in discovery. It appears in sprint planning, in backlog refinement, in the questions engineers ask that nobody can answer. It appears as scope creep, rework, technical debt of the wrong kind, and delivery timelines that keep moving. Sometimes it appears as a full pivot — a moment where the organisation has to acknowledge that what was built does not do what the business needed it to do.

All of it traces back to a decision made before the project started.

Three Perspectives. All Three Required.

A complete discovery holds three perspectives simultaneously. In practice, most hold one well, one partially, and one not at all.

The human perspective is the most understood. Users, behaviours, needs, friction points, the gap between what people say they do and what they actually do. Design-led teams are trained for this. The methods are well established. This perspective usually gets covered.

The organisational perspective is where it starts to break down. The organisation is not the sum of its people — it is its own entity, with its own processes, systems, incentive structures, political constraints, and capacity to absorb change. Treating it as an extension of the human perspective is a mistake. A solution that works for users can still fail if the organisation cannot sustain it operationally, if it cuts across existing systems in ways nobody mapped, or if the incentives of the business functions it touches point in a different direction.

The organisation deserves to be researched as its own subject. Not as a backdrop to the human story, but as a principal in the design problem.

The technical perspective is almost never present in discovery — because the person who would bring it has not been introduced yet. They are waiting for design to be ready for implementation. By the time they arrive, the decisions that needed their input have already been made.

This is not a staffing oversight. It is a structural assumption about when technical expertise becomes relevant. That assumption is wrong, and the cost of it is paid in every sprint after design hands over.

All three perspectives feed into business strategy. All three feed into delivery. Missing any one of them produces a discovery that looks complete from the outside and isn't.

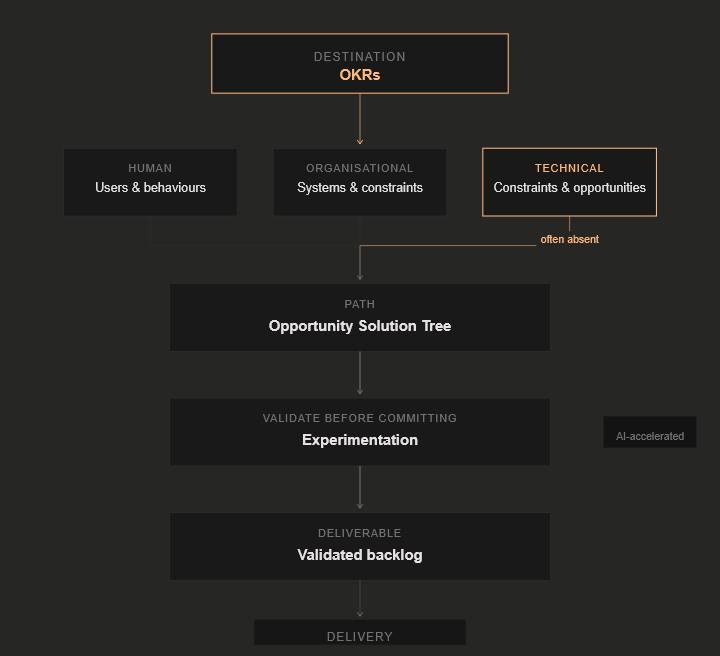

The Framework That Holds All Three

The integration of Objectives and Key Results with the Opportunity Solution Tree is not a new idea. What is consistently underestimated is how much the OST depends on technical fluency to function properly.

OKRs set the destination. They define what success looks like for the business in measurable terms. They answer the question: where are we trying to get to, and how will we know when we're there?

The Opportunity Solution Tree finds the path. It is a strategic framework that branches from desired outcomes through opportunities and candidate solutions to experiments, and only then to backlog items, mapping what is actually possible across the human, organisational, and technical landscape. Solutions are proposed, experimented with, and validated before they enter the backlog. Only what survives experimentation gets built.
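As a rough illustration of that structure, the tree can be sketched as nested records with a rule that only validated solutions reach the backlog. This is a minimal sketch, not a reference implementation of any OST tool: the class names, field names, the `perspective` tag, and the sample data are all hypothetical, chosen only to make the gating rule and the three-perspective check concrete.

```python
from dataclasses import dataclass, field


@dataclass
class Experiment:
    name: str
    passed: bool  # True if the evidence supported the solution


@dataclass
class Solution:
    name: str
    experiments: list[Experiment] = field(default_factory=list)

    def is_validated(self) -> bool:
        # A solution with no experiments is still an assumption, so it fails.
        return bool(self.experiments) and all(e.passed for e in self.experiments)


@dataclass
class Opportunity:
    name: str
    perspective: str  # assumed tags: "human", "organisational", "technical"
    solutions: list[Solution] = field(default_factory=list)


@dataclass
class Outcome:
    key_result: str
    opportunities: list[Opportunity] = field(default_factory=list)


def build_backlog(outcome: Outcome) -> list[str]:
    """Return only the solutions that survived experimentation."""
    return [
        solution.name
        for opportunity in outcome.opportunities
        for solution in opportunity.solutions
        if solution.is_validated()
    ]


def missing_perspectives(outcome: Outcome) -> set[str]:
    """Report which of the three perspectives has no mapped opportunity."""
    required = {"human", "organisational", "technical"}
    return required - {o.perspective for o in outcome.opportunities}


# Hypothetical sample tree, for illustration only.
example = Outcome(
    key_result="Raise repeat purchases",
    opportunities=[
        Opportunity(
            "Customers cannot find past orders",
            "human",
            [
                Solution("Order history search", [Experiment("Prototype test", True)]),
                Solution("Reorder reminder emails"),  # never tested
            ],
        ),
        Opportunity(
            "Order data is split across two systems",
            "technical",
            [Solution("Unified order API", [Experiment("Integration spike", False)])],
        ),
    ],
)

print(build_backlog(example))
print(missing_perspectives(example))
```

In this sample, the untested solution and the one whose experiment failed both stay out of the backlog, and the check flags that no organisational opportunity was mapped. Treating "no experiments" as "not validated" is a deliberate choice here: it keeps untested assumptions from passing as decisions.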

The insight is in the integration. The OST is a design- and technology-driven effort. It requires someone who can investigate technical opportunities and constraints with the same rigour that a designer brings to investigating human needs. Without that, the opportunity space only gets mapped from two of three directions. Technical constraints get discovered in week six. Integration points that could have unlocked entirely different solution directions never get identified. The opportunity tree grows in the wrong soil.

The OST maps the opportunity space. Without technical fluency in the room, you are mapping only part of it.

Experimentation — the step that sits between opportunity and backlog — has historically been where this framework broke down in practice. Building something testable took time and budget that most discovery phases did not have. Teams skipped the step, or ran too few experiments to be confident, and solutions entered the backlog on the basis of assumption rather than evidence.

AI changes this calculus. Rapid prototyping of coded samples — functional, testable, close enough to real that users and stakeholders can respond to them — is now a fraction of the cost and time it was two years ago. The experimentation layer that was theoretically correct but practically difficult is now practically achievable as a standard step. The excuse for skipping it has weakened considerably.

What AI does not change is the judgment required to design the right experiments, interpret what the results mean, and decide whether a solution has genuinely de-risked the decision it was testing. That judgment still requires the human capability the framework depends on. AI accelerates the execution. It does not replace the thinking.

The output of a discovery run this way is not a research deck. It is a validated, outcome-linked, technically de-risked backlog. That is the deliverable. That is what the organisation is buying when it resources discovery properly.

Why the Right Capability Keeps Arriving Too Late

Three forces conspire to keep technical expertise out of the discovery room.

Cost sensitivity. Discovery is where budget feels optional. The argument for keeping the team lean is intuitive: we are not building anything yet, so why do we need a full team? The flaw in this reasoning is that discovery is precisely where the most expensive decisions in the programme are made — or made by default when nobody makes them deliberately. The cost of under-resourcing discovery does not appear on the discovery budget. It appears in delivery, by which point it is too late and too expensive to trace back to its origin.

Premature deadlines. Delivery timelines are often fixed before anyone understands what the problem actually requires. Discovery gets compressed to fit a schedule that was never realistic, which means the experimentation layer gets cut first, the technical perspective gets deferred, and the backlog gets populated with assumptions that feel like decisions.

Role defaults. Organisations staff product programmes by title. Architect. Tech lead. Senior developer. These roles have conventional entry points in the delivery sequence — after design, before or during build. The person who should be present in the discovery room does not have a standard title. They do not appear on the conventional programme plan. So they do not appear in the room.

The result is not malicious. It is structural. The system is optimised for a model of product delivery that separates design thinking from technical thinking and sequences them rather than running them in parallel. That model is expensive, and most organisations are paying the cost without knowing it.

What the Right Person Actually Does

The gap is not filled by involving engineers earlier, though earlier is better than later. It is filled by involving the right kind of practitioner — one whose role in discovery is not to review design decisions for technical feasibility, but to conduct technical research in parallel with human and organisational research from the outset.

In practice, this means mapping the technical landscape the way a designer maps a user journey. Identifying integration points, data assets, existing constraints, and technical debt as inputs to the opportunity space — not as obstacles encountered after solutions have been chosen. Facilitating design synthesis with technological insight rather than waiting to respond to design output. Translating between design intent and technical reality in both directions, fluently, without the loss that accumulates at every handoff.

It also means being capable of operating the OKR and OST framework across all three perspectives — holding the human, organisational, and technical dimensions simultaneously rather than delegating them to separate workstreams that reconvene when something breaks.

The title attached to this capability matters less than the capability itself. The argument for why this combination is rarer and more valuable than any single discipline alone is made in full in "The Interpreter Role Nobody Hires For".

The Real Cost of Getting This Wrong

The sprint planning moment at the start of this article did not cost three days. It cost three visible days, plus everything invisible upstream that produced them: the undocumented assumption that became a ticket, the design direction that was never stress-tested, the technical constraint that nobody knew to investigate, the experiment that was never run because the timeline had no room for it.

Discovery properly resourced — with all three perspectives present, a framework that connects business outcomes to validated solutions, and the technical fluency to map the full opportunity space — is the cheapest risk mitigation available to any product programme.

The alternative is not a shorter, cheaper project. It is the same project, with the same problems, paid for twice. Once in delivery. Once when the organisation asks why what was built does not do what the business needed it to do.

The answer to that question was always in discovery. It just was not there when it needed to be.
